Your Production Code Is Training AI Models Right Now (And How to Audit Your Stack)

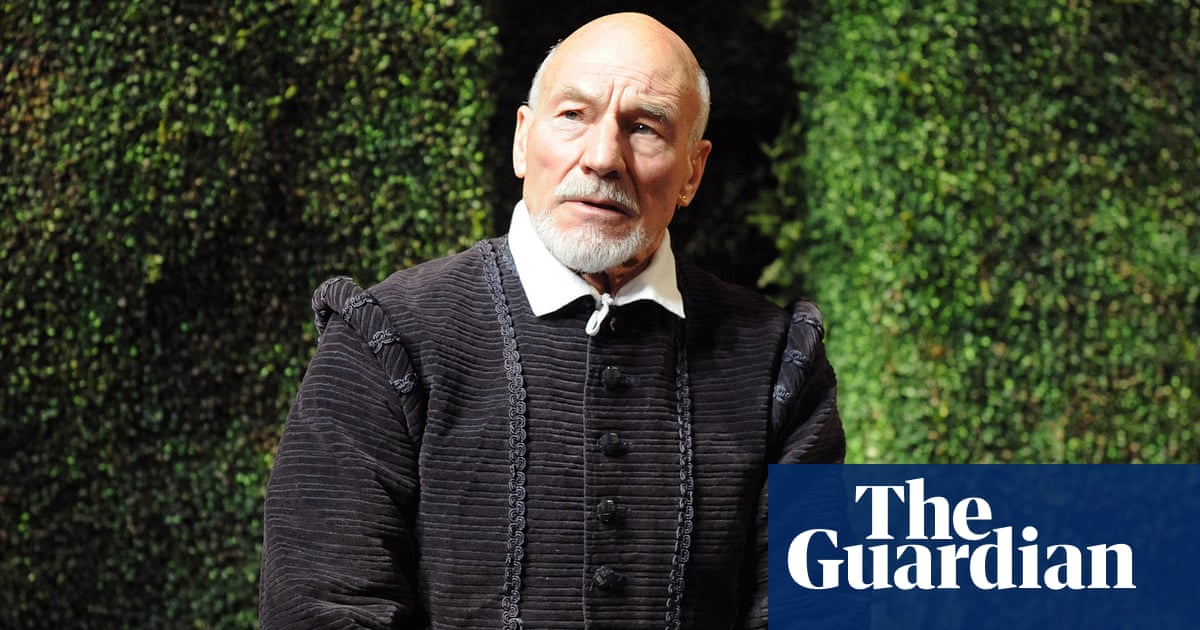

Source: DEV Community

Every AI coding tool you use needs access to your code to function. Copilot reads your files for completions. Cursor indexes your project for context. LangChain traces log your prompts and outputs for observability.

The problem is not that these tools access your code. The problem is that most engineers never ask what happens to that code after the tool processes it. Where does the telemetry go? Who trains on it? Is your proprietary logic ending up in a foundation model's training set?

This week, GitHub's decision to opt all users into AI model training by default made this question impossible to ignore. But GitHub is not the only platform doing this. It is the default pattern across the entire AI tooling stack.

The Default Is Always "Opt In"

Here is how it works at almost every AI tool company: ship the feature, opt everyone in, bury the toggle three levels deep in settings, and wait for someone to notice.

GitHub opted users into training data collection. The setting is under Settings